Building an AI workout coach

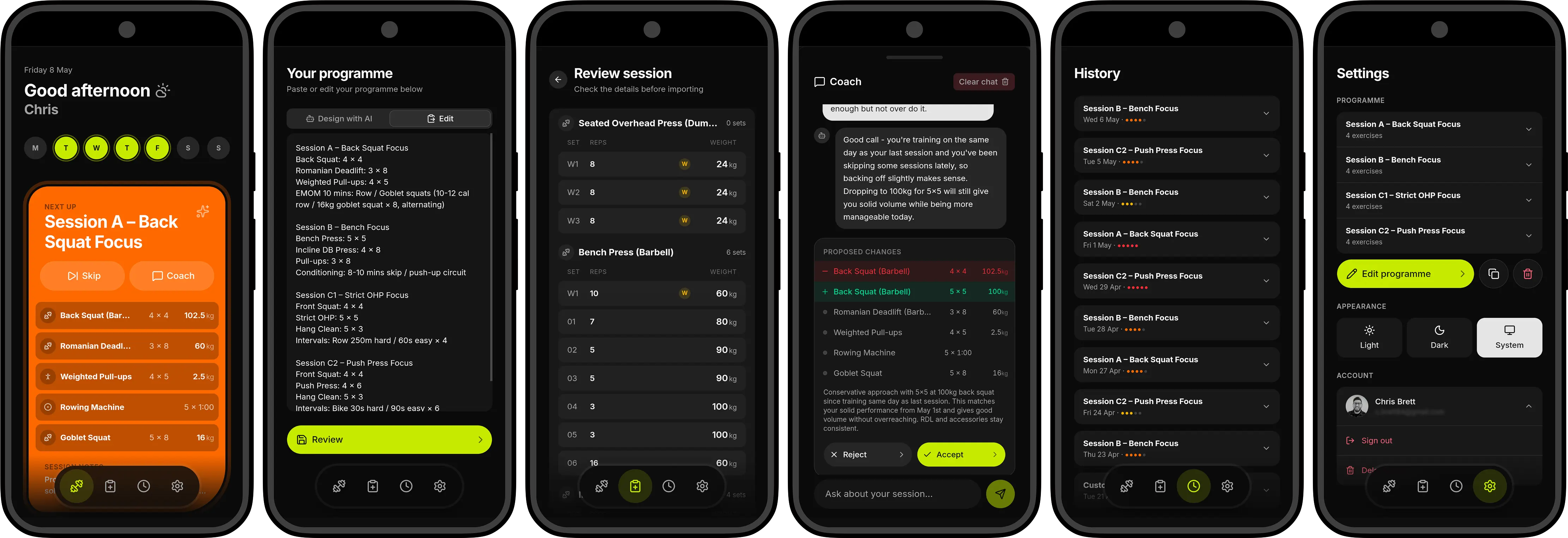

I built a side project that uses AI to generate personalised workout sessions. Here's how it came together.

From side project to product

For a few years now, I've had the same frustration with fitness apps. They either log your workouts or they try to sell you a templated programme built by an influencer inside their own walled garden. Neither of those things is what I've been after.

What I actually want is simple. I want to walk into the gym and be told what to do. I want something that knows what I did last session, understands what I'm working towards, and gives me the next step. I want to see progression, not just track my workouts.

That product didn't exist in a form I was happy with. So I figured, why not build it myself?

Why it took so long to start

I'd thought about building this for years. Every time I sat down to scope it out, the project would balloon. I'd start with "next session recommendation" and before long I'd be designing a full in-gym logging experience, a progress dashboard, social features, maybe a marketplace. I never got it off the ground because it always felt too overwhelming, and I could never pare it back to a quick MVP I'd be happy with.

The other problem was generating a workout programme that was actually good. One that accounts for progressive overload, session balance, individual goals. It felt like either a research project or something you'd need a real coach to validate. I didn't have time for either.

What changed

AI made the hard part easy. The thing that had always felt most daunting, generating intelligent, personalised session recommendations, turned out to be an afternoon's work with Claude. That had been the blocker, and now it had simply vanished.

Beyond that, breaking the project down into small pieces and tracking them in Linear made the whole thing feel so much more manageable. Each piece of work was small enough to finish in a session with no scope creep, no grand plan. Just small milestones, one after another. That's what got the app from idea to something I actually use on a daily basis when in the gym.

The product decision that made the most difference

There's an existing app called Strong that I use, and it handles in-gym logging exceptionally well. The most important decision I made was also the simplest: don't rebuild Strong.

Strong's in-session tracking is genuinely good. It's simple, it's fast, and I use it every time I train. Recreating that experience felt unnecessary and, frankly, like exactly the kind of scope creep that had killed this project in the first place. So I didn't (though I do have issues logged to build those features).

Instead I asked: what does this app need to own? The answer was two things. What should I do next? And what have I done before? Everything in between, the actual in-gym logging, stays in Strong.

That constraint shaped the entire app.

The unsexy problem: parsing a text export

Strong doesn't have a public API. If you want your workout data out of it, you can export it as plain text. That meant before I could do anything intelligent with workout history, I needed to write a parser.

The export format is consistent enough to parse reliably, but it has edge cases: conditioning sets, warm-up sets, exercise-level notes, locale differences in number formatting. Getting that right before building anything on top of it was the right call.
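To make two of those edge cases concrete (warm-up sets and decimal commas), here is a minimal sketch of parsing a single set line. The line format shown (`Set 1: 82,5 kg × 5`, `Warm-up 1: 40 kg × 10`) is an assumption for illustration, not Strong's documented export format, and `ParsedSet`, `parseLocaleNumber` and `parseSetLine` are hypothetical names.

```typescript
// Hypothetical shape for one parsed set; field names are illustrative.
interface ParsedSet {
  kind: "working" | "warmup";
  weightKg: number;
  reps: number;
}

// Accepts "82.5" or "82,5" (decimal comma). Thousands separators are not handled.
function parseLocaleNumber(raw: string): number {
  const value = Number(raw.trim().replace(",", "."));
  if (Number.isNaN(value)) {
    throw new Error(`Not a number: ${raw}`);
  }
  return value;
}

// Assumed line format: "Set 1: 82,5 kg × 5" or "Warm-up 1: 40 kg x 10".
const SET_LINE = /^(Set|Warm-up)\s+\d+:\s*([\d.,]+)\s*kg\s*[×x]\s*(\d+)$/i;

function parseSetLine(line: string): ParsedSet | null {
  const match = SET_LINE.exec(line.trim());
  if (match === null) {
    return null; // Not a set line, e.g. an exercise heading or a note.
  }
  return {
    kind: match[1].toLowerCase() === "set" ? "working" : "warmup",
    weightKg: parseLocaleNumber(match[2]),
    reps: Number(match[3]),
  };
}
```

Returning `null` for anything that is not a set line lets the caller decide whether that line is a note, a heading, or something unexpected worth logging.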

Once the parser was solid, the rest of the data layer came together quickly. Sessions, exercises, and sets are all stored in a Neon Postgres database via Drizzle.
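As a rough picture of that data layer, the sketch below flattens one parsed session into session, exercise and set rows linked by IDs. It uses plain TypeScript types rather than a real Drizzle schema, and every name here (`ParsedSession`, `toRows`, the column names, the ID scheme) is an assumption made for illustration.

```typescript
// Nested shape a parser might produce (hypothetical).
interface ParsedSession {
  performedAt: string; // ISO 8601 timestamp
  exercises: { name: string; sets: { weightKg: number; reps: number }[] }[];
}

// Flat row shapes, roughly one per table (column names are illustrative).
interface SessionRow { id: string; performedAt: string }
interface ExerciseRow { id: string; sessionId: string; name: string; position: number }
interface SetRow { exerciseId: string; position: number; weightKg: number; reps: number }

function toRows(session: ParsedSession, sessionId: string) {
  const exerciseRows: ExerciseRow[] = [];
  const setRows: SetRow[] = [];

  session.exercises.forEach((exercise, exerciseIndex) => {
    // Derived ID for the sketch only; a real table would likely generate its own keys.
    const exerciseId = `${sessionId}-e${exerciseIndex}`;
    exerciseRows.push({ id: exerciseId, sessionId, name: exercise.name, position: exerciseIndex });

    exercise.sets.forEach((set, setIndex) => {
      setRows.push({ exerciseId, position: setIndex, weightKg: set.weightKg, reps: set.reps });
    });
  });

  const sessionRow: SessionRow = { id: sessionId, performedAt: session.performedAt };
  return { sessionRow, exerciseRows, setRows };
}
```

Storing a `position` on exercises and sets keeps the original order of the workout recoverable after the nesting is gone.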

Where AI actually fits

There are two places AI does real work in this app, and they work quite differently.

The first is session generation. With parsed session data coming in cleanly from Strong, this was straightforward. I used the Vercel AI SDK, specifically generateObject with a Zod schema, to get structured output rather than freeform text. I wanted a session plan I could render in a UI, not a wall of markdown. The AI gets context about the previous session, the current rotation position, the programme structure, and any notes logged after training. It returns prescribed lifts, weights, sets, reps, and a short coaching note. The result is persisted so subsequent requests don't re-call the API unnecessarily.
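The "generate once, then reuse" behaviour can be sketched without the SDK. In the example below the model call is replaced by an injected `generate` function, and an in-memory `Map` stands in for the database, so this is a simplified illustration rather than the app's actual persistence code. The `SessionPlan` fields mirror the outputs listed above; the type names, `PlanStore`, and the idea of keying by user and rotation slot are all assumptions.

```typescript
// Illustrative shape of a generated plan; field names are assumptions.
interface PrescribedLift {
  exercise: string;
  weightKg: number;
  sets: number;
  reps: number;
}

interface SessionPlan {
  lifts: PrescribedLift[];
  coachingNote: string;
}

// Stand-in for the real model call, injected so the caching logic can be shown on its own.
type GeneratePlan = (key: string) => Promise<SessionPlan>;

class PlanStore {
  // In-memory stand-in for a persisted table of generated plans.
  private readonly plans = new Map<string, Promise<SessionPlan>>();

  getOrGenerate(key: string, generate: GeneratePlan): Promise<SessionPlan> {
    const existing = this.plans.get(key);
    if (existing !== undefined) {
      return existing; // Already generated (or in flight): no second model call.
    }
    const pending = generate(key);
    this.plans.set(key, pending);
    // Forget failed attempts so a later request can retry.
    pending.catch(() => {
      this.plans.delete(key);
    });
    return pending;
  }
}
```

Storing the promise rather than the resolved plan means two requests arriving at the same time share one generation instead of triggering two.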

The second is programme design. This one's conversational at the moment. When a new user sets up the app, they don't fill out a form. They have a short chat with the AI about their goals, schedule, equipment, and experience. The AI asks a few questions, then designs a full programme and previews it. If you don't like something, you say so and it adjusts. Under the hood this uses streamText with tool calling. The AI calls a save_programme tool when it's ready, which renders a structured preview the user can approve or tweak.
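One way to picture the server side of a tool like save_programme is a handler that checks the model's arguments and returns either a preview or an error message the model can react to. The sketch below hand-rolls that validation in plain TypeScript; in an SDK setup a schema library would normally do this work. The argument shape (`name`, `sessionTypes`, `rotation`) and the names `handleSaveProgramme` and `ToolResult` are hypothetical, not taken from the app.

```typescript
type ToolResult = { ok: true; preview: string } | { ok: false; error: string };

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

function isStringArray(value: unknown): value is string[] {
  return Array.isArray(value) && value.every((item) => typeof item === "string");
}

// Validates untrusted tool arguments and builds a plain-text preview.
function handleSaveProgramme(args: unknown): ToolResult {
  if (!isRecord(args)) {
    return { ok: false, error: "Arguments must be an object." };
  }
  const { name, sessionTypes, rotation } = args;
  if (typeof name !== "string" || name.trim() === "") {
    return { ok: false, error: "Programme needs a name." };
  }
  if (!Array.isArray(sessionTypes) || sessionTypes.length === 0) {
    return { ok: false, error: "Programme needs at least one session type." };
  }

  const labels = new Set<string>();
  const lines: string[] = [];
  for (const entry of sessionTypes) {
    if (!isRecord(entry)) {
      return { ok: false, error: "Each session type must be an object." };
    }
    const { label, exercises } = entry;
    if (typeof label !== "string" || !isStringArray(exercises)) {
      return { ok: false, error: "Each session type needs a label and a list of exercises." };
    }
    labels.add(label);
    lines.push(`${label}: ${exercises.join(", ")}`);
  }

  if (!isStringArray(rotation) || rotation.length === 0) {
    return { ok: false, error: "Programme needs a rotation." };
  }
  const unknownLabel = rotation.find((label) => !labels.has(label));
  if (unknownLabel !== undefined) {
    return { ok: false, error: `Rotation refers to unknown session type "${unknownLabel}".` };
  }

  return { ok: true, preview: [name, ...lines, `Rotation: ${rotation.join(" -> ")}`].join("\n") };
}
```

Returning a readable error instead of throwing gives the conversation a chance to recover: the message can be passed back to the model so it corrects the programme and tries again.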

I'm happy with how these turned out so far. Structured output for the thing that feeds a UI, conversation for the thing that needs back-and-forth. Neither was particularly complex to build, but picking the right approach for each meant they both worked first time and haven't caused issues since.

The programme model: from hardcoded to flexible

Early on, the app was built around my specific A/B/C rotation. Three session types, hardcoded with a tracker that cycled through them. It worked for me, but it was obviously not going to work for anyone else.

Making the programme model user-defined turned out to be one of the more interesting pieces of work. The schema needed to support arbitrary session types, flexible rotation orders, and the ability to redesign a programme without losing history. The rotation itself became its own table, a sequence of slots that can be reordered with drag-and-drop in the UI.
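A rotation stored as an ordered sequence of slots makes two operations simple: picking the next session and reordering after a drag-and-drop. Here is a minimal in-memory sketch of both. `RotationSlot`, `nextSlot` and `moveSlot` are hypothetical names, and the real table will carry more columns than this.

```typescript
// One row of a rotation: where it sits in the cycle and which session type it points at.
interface RotationSlot {
  position: number;
  sessionTypeId: string;
}

// Returns the slot after the last completed one, wrapping to the start of the cycle.
// Pass null when nothing has been completed yet.
function nextSlot(slots: RotationSlot[], lastCompletedPosition: number | null): RotationSlot {
  if (slots.length === 0) {
    throw new Error("Rotation has no slots.");
  }
  const ordered = [...slots].sort((a, b) => a.position - b.position);
  // findIndex yields -1 for null or an unknown position, which maps to the first slot.
  const lastIndex = ordered.findIndex((slot) => slot.position === lastCompletedPosition);
  return ordered[(lastIndex + 1) % ordered.length];
}

// Moves one slot to a new index and renumbers positions from 0.
function moveSlot(slots: RotationSlot[], fromIndex: number, toIndex: number): RotationSlot[] {
  const ordered = [...slots].sort((a, b) => a.position - b.position);
  const inRange = (index: number) => Number.isInteger(index) && index >= 0 && index < ordered.length;
  if (!inRange(fromIndex) || !inRange(toIndex)) {
    throw new Error("Slot index out of range.");
  }
  const [moved] = ordered.splice(fromIndex, 1);
  ordered.splice(toIndex, 0, moved);
  return ordered.map((slot, index) => ({ ...slot, position: index }));
}
```

Because slots only reference a session type by ID, reordering the rotation or redesigning the programme never has to touch logged history.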

This is where the AI chat onboarding really paid off. Instead of building a complex multi-step form to capture programme structure, the conversation handles all of that naturally. The AI asks what it needs to know, designs something sensible, and the user either approves it or asks for changes. The structured data comes out the other side clean enough to store directly.

What phase 1 actually shipped

If you strip it back, the core loop is:

- A parser that ingests Strong exports

- A database that stores structured session history

- A programme model designed through conversation with AI

- An AI call that generates the next session based on history and programme

- A clean, mobile-first UI to display the result

Around that core, there's Google OAuth, a CI pipeline with linting and unit tests, and contextual deload recognition in the AI generation. I also quickly added a landing page with a register-interest flow. I've called it Coach for the time being, but the name is very much a work in progress.

One decision worth calling out was building this as a PWA, which meant a tech stack I was familiar with, no app store friction, and the ability to push changes instantly to the people testing it. For an MVP whose goal is market validation, I felt that this was the right call.

What I've learned so far

Honestly, the biggest lesson isn't technical. This project existed in my head for years and went nowhere because I kept trying to design the whole thing before building any of it. The moment I constrained it to one problem, one user, one phase, it became buildable. AI helped with the parts I didn't have as much experience with, and the tight scope made it shippable.

Now the interesting question is whether it's useful to anyone else. The landing page is live. The programme model is flexible enough to support different training styles. The next step is getting it into other people's hands and finding out what breaks.

So check out the app. If you like it, share it with a friend. If you don't, let me know what would make it better. Either way, I'm excited to see where it goes from here.